This article explores AWS Lambda Managed Instances as an alternative compute option for steady-state, high-volume serverless workloads. Using a telemetry collection pipeline as the example, it proposes an architecture where API Gateway directly enqueues events into SQS, and a Managed Instances Lambda processes messages in batches. The post walks through a small CDK-based PoC and highlights practical gotchas—VPC networking, memory-to-vCPU ratios, capacity provider association behavior, and NAT vs VPC endpoints—so you can evaluate Managed Instances with realistic constraints. It closes with a simple recommendation: keep traditional Lambda as the default for bursty/unpredictable workloads, and consider Managed Instances when your workers are effectively “always on” and you can size capacity around a steady baseline.

When architecting a serverless solution, a common concern is that "Lambda is very costly at scale". That can be true, depending on the service's traffic pattern and on what the function is doing.

One thing I love about Lambda is that it lets you move fast and keep operational overhead to a minimum: a simple execution model, clean and easy integrations with other AWS services, and a powerful scaling model.

But there are also tradeoffs that become evident when traffic grows or becomes "steady state", resulting in a Lambda function that is effectively "always on".

Recently, AWS announced "Lambda Managed Instances". It caught my attention because it seems to fill that gap: "steady-state" workloads with Lambda's dev experience.

It's not a replacement for traditional Lambda. I see it more as a way to "stay inside Lambda's flow with a capacity-style compute and scaling model", which is great for high-volume, steady traffic.

In this post, I analyze a concrete use case where I think Lambda Managed Instances fits as a compute choice. I also go over a small POC where I explore this new offering, using CDK to deploy it.

Use Case

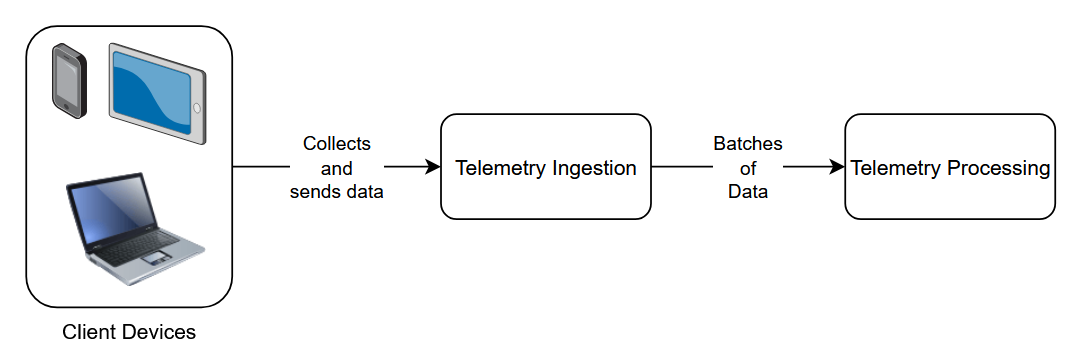

For the use case, let's dive into a "collect telemetry" scenario, where many client devices running one or more apps send telemetry data that can add up to a lot of volume. Things like clicks, screen views, custom events and metrics are collected and sent to a "Telemetry Ingestion" service, which in turn sends the data in batches to a "Telemetry Processing" service.

A simplified diagram for the use case is shown as follows:

Since a single user navigating an app on a device can generate many events, the data volume at scale can be substantial. A good candidate for Lambda Managed Instances in this scenario is the "Telemetry Processing" service:

- It would be an "always-on" service. Even if usage varies during the day, there's usually steady traffic 24/7.

- Spikes in traffic, such as marketing campaigns, can be handled gracefully by Lambda's Capacity Provider.

- Ingestion needs to stay thin, so an approach that accepts telemetry data fast works here. SQS can help batch the data so that the Managed Instances function processes multiple telemetry events at a time.

The combination of "steady traffic" + "high volume" + "traffic spikes" makes this a good scenario to try out Lambda Managed Instances.

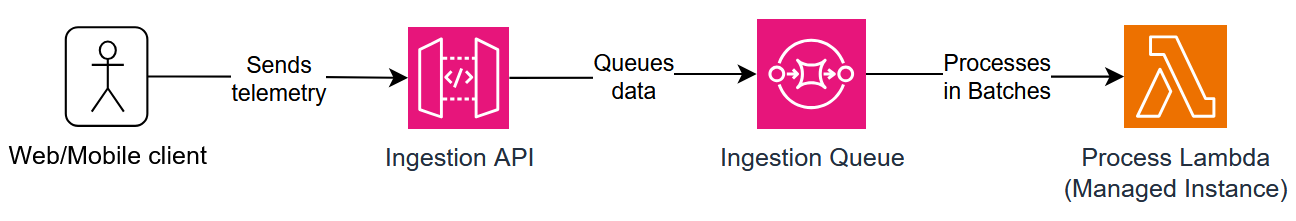

Architecture

As described in the previous section, the "Telemetry Ingestion" service needs a thin layer that accepts the telemetry data and batches it. For that, API Gateway as the entry point and an SQS queue to batch the messages work like a charm. With a direct integration between API Gateway and SQS, there is no need for another compute layer in between the two.

For the "Telemetry Processing" service, since ingestion goes through an SQS queue, the batching is controlled nicely. A DLQ can be introduced to capture messages that the Lambda function can't process, so that those messages are not lost. For the compute, it's possible to use Lambda with the "Managed Instances" variant. It fits the use case nicely and should be more cost effective at scale, because the queue is rarely empty and the workers stay busy.
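To make the batching and DLQ behavior concrete, here is a minimal sketch of what the processing handler could look like. It assumes the SQS event source mapping has partial batch responses (`ReportBatchItemFailures`) enabled, so only the failed messages are retried and eventually moved to the DLQ. The `TelemetryEvent` shape and the `parseTelemetry` and `processBatch` helpers are hypothetical names for illustration, not taken from the POC repo.

```typescript
// Minimal local types covering only the SQS event fields this sketch uses
// (the real event carries more fields).
interface SqsRecord {
  messageId: string;
  body: string;
}

interface SqsEvent {
  Records: SqsRecord[];
}

interface SqsBatchResponse {
  batchItemFailures: { itemIdentifier: string }[];
}

// Hypothetical telemetry payload; the article does not define a schema.
interface TelemetryEvent {
  deviceId: string;
  type: string;
  timestamp: number;
}

// Parse and validate one message body; throws on malformed input.
function parseTelemetry(body: string): TelemetryEvent {
  const data = JSON.parse(body) as Partial<TelemetryEvent>;
  if (
    typeof data.deviceId !== "string" ||
    typeof data.type !== "string" ||
    typeof data.timestamp !== "number"
  ) {
    throw new Error("invalid telemetry event");
  }
  return { deviceId: data.deviceId, type: data.type, timestamp: data.timestamp };
}

// Process every record in the batch and collect the IDs of those that failed,
// so SQS retries only the failures instead of the whole batch.
function processBatch(
  event: SqsEvent,
  sink: (e: TelemetryEvent) => void,
): SqsBatchResponse {
  const batchItemFailures: { itemIdentifier: string }[] = [];
  for (const record of event.Records) {
    try {
      sink(parseTelemetry(record.body));
    } catch {
      batchItemFailures.push({ itemIdentifier: record.messageId });
    }
  }
  return { batchItemFailures };
}

// Lambda entry point. The sink is a placeholder for storing or forwarding events.
export async function handler(event: SqsEvent): Promise<SqsBatchResponse> {
  return processBatch(event, () => {
    /* store or forward the event here */
  });
}
```

Keeping the per-record logic in a plain function makes it easy to unit test without deploying anything. Messages that keep failing are redelivered until the queue's maximum receive count is reached, at which point SQS moves them to the DLQ.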

Let's now see how to implement a sample POC of this architecture, focusing on the "Lambda Managed Instance" part, which is the telemetry processing piece.

POC

Implementation using an IaC framework like AWS CDK is straightforward. The stack is composed of one HTTP endpoint on API Gateway, one SQS queue plus a DLQ, and one Lambda function running on Managed Instances (EC2) with a Capacity Provider defined.

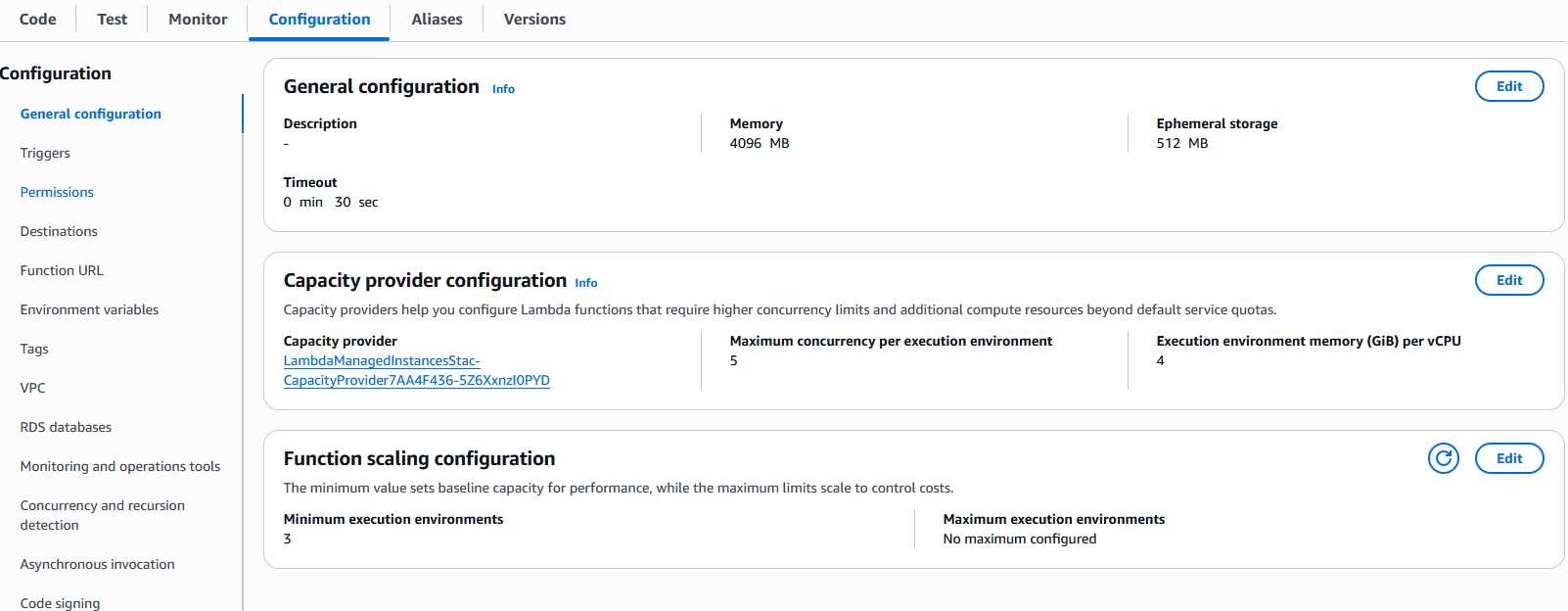

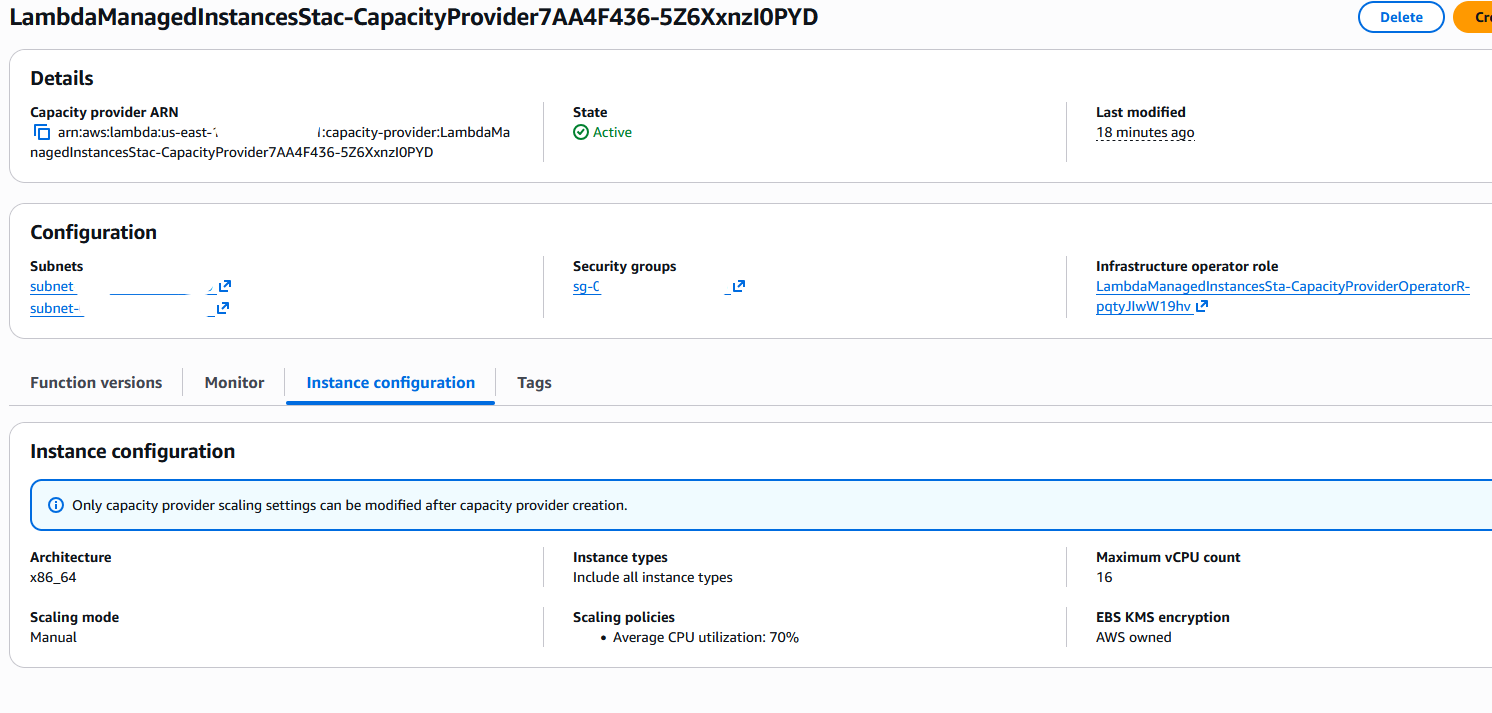

Defining a function on Managed Instances differs from defining a traditional Lambda function in a few ways. Some gotchas I learnt during the implementation:

- The EC2 instances that are spun up live inside a VPC, in private subnets, so the networking configuration also needs to be defined in CDK for the Lambda function to work properly.

- The function's memory must be compatible with the memory-to-vCPU ratio. When attaching a function to a Capacity Provider, a supported ratio must be chosen, which at the time of writing is 2 GB, 4 GB or 8 GB per vCPU. If the function's memory is too small for the chosen ratio, deployment will fail.

- Attaching a function to a Capacity Provider is one-way. At the time of writing, it's not possible to "go back to traditional Lambda" by removing the association. To go back, a new function must be created.

- For this POC, adding a NAT gateway works. However, because of its cost, in a real scenario it might be better to use VPC endpoints for the services the function talks to, such as SQS or CloudWatch.
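The memory-to-vCPU gotcha is the kind of thing that can be caught before deploying. The sketch below is a small pre-deploy sanity check. It rests on one assumption that should be verified against the AWS documentation: that a function needs at least one full vCPU's worth of memory at the chosen ratio (for example, at least 4096 MB at 4 GB per vCPU). The `checkMemoryRatio` helper is a hypothetical name, not part of CDK.

```typescript
// Ratios (GB of memory per vCPU) the article lists as supported at the time of writing.
const SUPPORTED_GB_PER_VCPU = [2, 4, 8];

// Returns a list of problems; an empty list means the combination looks deployable.
// ASSUMPTION: the minimum memory is one vCPU's worth at the chosen ratio.
function checkMemoryRatio(memoryMb: number, gbPerVcpu: number): string[] {
  if (!SUPPORTED_GB_PER_VCPU.some((r) => r === gbPerVcpu)) {
    return [`unsupported ratio: ${gbPerVcpu} GB per vCPU`];
  }
  const minMemoryMb = gbPerVcpu * 1024;
  if (memoryMb < minMemoryMb) {
    return [
      `memory ${memoryMb} MB is below the assumed minimum of ${minMemoryMb} MB ` +
        `for ${gbPerVcpu} GB per vCPU`,
    ];
  }
  return [];
}
```

Calling something like `checkMemoryRatio(2048, 4)` in a unit test next to the stack definition gives a readable failure locally instead of a failed deployment.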

For the complete code implementation of the POC, please see the following GitHub Repo.

Some screenshots of the deployed stack:

Conclusion

I don't think Lambda Managed Instances will do much to change how people are using Lambda. Traditional Lambda is still the best default for unpredictable workloads. But for steady-state systems where functions end up "always-on", Lambda Managed Instances are a great option for staying in the Lambda ecosystem while moving to a capacity-oriented model.

The telemetry use case is a good example of where Lambda Managed Instances fits in nicely. With a direct integration between API Gateway and an SQS queue, we keep the telemetry ingestion simple and avoid unnecessary compute. The queue provides buffering and batching, and a Lambda function running on Managed Instances consumes from it.

The gotchas I found when developing the POC were the VPC configuration, the Capacity Provider and function configuration, and the fact that a function associated with a Capacity Provider can't have that association removed to go back to traditional Lambda.

Before adopting Lambda Managed Instances in a real production scenario, I would measure traffic first and base the decision on that. Useful metrics include queue depth and arrival rate, processing throughput, and cost under traditional Lambda (optionally considering provisioned concurrency). Then run the same comparison for Managed Instances with a configuration sized for steady traffic.
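As a rough starting point for that comparison, the arithmetic can be sketched as follows. It uses Little's law (average in-flight invocations = invocation rate × duration) to estimate the steady baseline, plus two simple monthly cost models. All names here are hypothetical, and every price is an input you supply from the current AWS pricing pages; for Managed Instances, fold whatever fees apply into the hourly figure.

```typescript
interface Workload {
  arrivalPerSecond: number; // average messages arriving per second
  batchSize: number;        // messages handled per invocation
  batchDurationSec: number; // average time to process one batch
  memoryGb: number;         // configured function memory
}

// Little's law: average concurrent invocations needed to keep up with arrivals.
function steadyConcurrency(w: Workload): number {
  return (w.arrivalPerSecond / w.batchSize) * w.batchDurationSec;
}

// Monthly cost under a per-request plus per-GB-second pricing model.
function monthlyPerInvocationCost(
  w: Workload,
  pricePerRequest: number,
  pricePerGbSecond: number,
  secondsPerMonth = 30 * 24 * 3600,
): number {
  const invocations = (w.arrivalPerSecond / w.batchSize) * secondsPerMonth;
  const gbSeconds = invocations * w.batchDurationSec * w.memoryGb;
  return invocations * pricePerRequest + gbSeconds * pricePerGbSecond;
}

// Monthly cost of a fixed fleet billed by the hour.
function monthlyFleetCost(
  instances: number,
  hourlyCostPerInstance: number,
  hoursPerMonth = 30 * 24,
): number {
  return instances * hourlyCostPerInstance * hoursPerMonth;
}
```

For example, 1,000 messages per second in batches of 10 that take 0.5 seconds each works out to a steady concurrency of 50. That is the baseline to size capacity around, with headroom for spikes. This model ignores retries, cold starts and idle capacity, so treat the result as a first estimate rather than a forecast.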

References:

- Official AWS blog announcement: https://aws.amazon.com/blogs/aws/introducing-aws-lambda-managed-instances-serverless-simplicity-with-ec2-flexibility/

- Serverless Land pattern, “Lambda managed instances hello world”: https://serverlessland.com/patterns/lambda-managed-instances-cdk?ref=search

- Lambda Managed Instances best practices: https://docs.aws.amazon.com/lambda/latest/dg/lambda-managed-instances-best-practices.html

- CDK Lambda Capacity Provider documentation: https://docs.aws.amazon.com/cdk/api/v2/docs/aws-cdk-lib.aws_lambda.CapacityProvider.html
