The Problem

Dead‑letter queues are supposed to protect your system. They catch failed messages so that the rest of your architecture can keep running safely.

In practice, though, they often become a backlog of repetitive decisions. A message fails, an engineer opens the DLQ, reads the error, and decides whether to retry, suppress, or escalate. The next day, the same decision shows up again, only with a slightly different payload.

It is tempting to “just add more retries” or let the Lambda auto‑reprocess DLQ messages. But that simply moves risk into production. Some failures are unsafe to retry. Business rule violations, for example, are deterministic; redriving them blindly doesn’t fix anything. It just repeats the failure and can create unintended side effects.

So the real challenge is not automation.

It's safe automation.

The Approach: AI Suggests, Guardrails Decide

The solution works because it separates three concerns that are often mixed together: classification, safety enforcement, and execution. The model helps interpret failures, deterministic guardrails decide what is allowed, and the workflow enforces the final action.

That separation is deliberate. The model can suggest an action, but it can never execute one on its own.

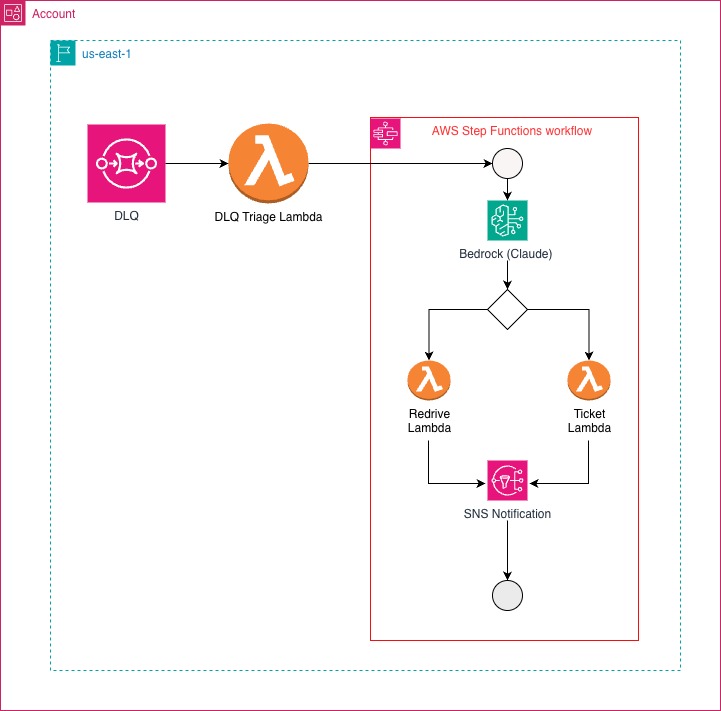

In practice, the system combines Amazon SQS for the DLQ source, AWS Lambda for normalization and guardrails, Amazon Bedrock (Claude) for AI‑assisted classification, AWS Step Functions for deterministic orchestration, and Amazon SNS for notifications.

Architecture Overview

The architecture is simple by design. The model provides a recommendation, deterministic guardrails decide what is allowed, and the workflow enforces the action.

Canonical DLQ Schema

When a message lands in the DLQ, a Lambda function first normalizes it into a canonical structure. That step matters because DLQ payloads are rarely consistent across services.

A simplified schema looks like this:

    {
      "correlationId": "0194e12c-13c4-7358-bf00-d40b0d69497b",
      "failureCategory": "DOWNSTREAM_TIMEOUT",
      "errorMessage": "Timeout after 3 retries",
      "timestamp": "2025-01-15T10:36:00Z",
      "stateAtFailure": "FAILED",
      "redriveAttempts": 0
    }

Example SQS -> canonical field mapping:

- Body (JSON) -> payload.originalRecord

- MessageId -> metadata.sqsMessageId

- Attributes.ApproximateReceiveCount -> redriveAttempts

- Attributes.SentTimestamp -> timestamp

- MessageAttributes.* -> metadata.messageAttributes

Standardization comes first. Classification only works when the input is predictable.
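As a rough illustration of that normalization step, here is a minimal sketch that applies the field mapping above to a single record from a Lambda SQS event. The function name `normalize_sqs_record` is hypothetical, and the sketch assumes the record uses the standard Lambda event keys (`messageId`, `body`, `attributes`, `messageAttributes`). Fields that the mapping does not cover, such as `failureCategory` or `correlationId`, are left out because their source depends on the producing service.

```python
import json
from datetime import datetime, timezone


def normalize_sqs_record(record: dict) -> dict:
    """Map one Lambda SQS event record onto the canonical DLQ fields."""
    attributes = record.get("attributes", {})

    # Body is expected to be JSON; keep the raw value if it cannot be parsed.
    try:
        original = json.loads(record["body"])
    except (KeyError, TypeError, ValueError):
        original = record.get("body")

    # SentTimestamp is epoch milliseconds, delivered as a string.
    sent_ms = int(attributes.get("SentTimestamp", "0"))
    sent_at = datetime.fromtimestamp(sent_ms / 1000, tz=timezone.utc)

    return {
        "payload": {"originalRecord": original},
        "metadata": {
            "sqsMessageId": record.get("messageId"),
            "messageAttributes": record.get("messageAttributes", {}),
        },
        "redriveAttempts": int(attributes.get("ApproximateReceiveCount", "0")),
        "timestamp": sent_at.strftime("%Y-%m-%dT%H:%M:%SZ"),
    }
```

Keeping the unparsed body instead of raising is a deliberate choice in this sketch: a malformed payload is itself useful evidence for triage.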

AI‑Assisted Classification

Once normalized, the message is sent to Amazon Bedrock. Claude analyzes the failure and returns structured output describing the failure category, a recommended action, a confidence score, and a short explanation.

To keep that output safe and machine‑reliable, it must conform to a strict schema:

    from typing import Literal

    from pydantic import BaseModel, confloat


    class TriageOutput(BaseModel):
        category: str
        recommended_action: Literal["REDRIVE", "TICKET"]
        confidence: confloat(ge=0.0, le=1.0)  # constrained to the range [0, 1]
        summary: str
        reasoning: str

If the model fails, returns malformed JSON, or exceeds limits, the system defaults to creating a ticket. The fallback is deterministic. If the AI layer is uncertain, the system escalates.
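The fallback behavior can be sketched as follows. To keep the example runnable without third-party packages, it swaps the Pydantic model for hand-written checks on the same five fields. The name `parse_triage` and the contents of `FALLBACK` are assumptions made for illustration, not the production implementation.

```python
import json

ALLOWED_ACTIONS = ("REDRIVE", "TICKET")

# Deterministic fallback used whenever the model output cannot be trusted.
# The field values here are illustrative placeholders.
FALLBACK = {
    "category": "UNKNOWN",
    "recommended_action": "TICKET",
    "confidence": 0.0,
    "summary": "Model output unavailable or invalid",
    "reasoning": "Defaulted to ticket creation",
}


def parse_triage(raw_text):
    """Return a validated triage dict, or the TICKET fallback on any problem."""
    try:
        data = json.loads(raw_text)
    except (TypeError, ValueError):
        return dict(FALLBACK)
    if not isinstance(data, dict):
        return dict(FALLBACK)

    confidence = data.get("confidence")
    valid = (
        data.get("recommended_action") in ALLOWED_ACTIONS
        and isinstance(confidence, (int, float))
        and not isinstance(confidence, bool)
        and 0.0 <= confidence <= 1.0
        and all(
            isinstance(data.get(key), str)
            for key in ("category", "summary", "reasoning")
        )
    )
    return data if valid else dict(FALLBACK)
```

Every failure path returns the same ticket-creating result, so downstream steps never have to distinguish "model said TICKET" from "model output was unusable" in order to stay safe.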

If the model recommends REDRIVE, the objective is to re-publish the original payload to the source queue or topic. The DLQ message is still available during processing and is deleted only after the handler succeeds. If the message has already been deleted or expired, recovery must be out-of-band (logs or upstream systems), using correlationId to locate the original event.
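A minimal sketch of the redrive step for the queue case is shown below. It assumes the canonical record keeps the original body under `payload.originalRecord`, as in the field mapping, and that `sqs_client` is a boto3 SQS client (or any object exposing a compatible `send_message`). The function name `redrive` and the queue URL are hypothetical.

```python
import json


def redrive(sqs_client, source_queue_url: str, canonical: dict) -> str:
    """Re-publish the original payload to the source queue.

    Errors are not caught here, so a failed publish fails the handler
    and the DLQ message is left in place.
    """
    original = canonical["payload"]["originalRecord"]
    body = original if isinstance(original, str) else json.dumps(original)

    response = sqs_client.send_message(
        QueueUrl=source_queue_url,
        MessageBody=body,
    )
    return response["MessageId"]
```

Letting the exception propagate is the point of the sketch: the DLQ copy is only safe to remove once the re-publish has demonstrably succeeded.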

Guardrails: Where Safety Lives

Models are probabilistic. Operations must be deterministic.

Before any action is taken, guardrails validate whether redriving is safe. They check message age, retry count, confidence threshold, and allowlisted failure categories.

A simplified example looks like this:

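    allow_redrive = True  # start permissive; any failed check below denies

    if message_age_days > 2:     # too old to replay safely
        allow_redrive = False
    if redrive_attempts >= 2:    # retry budget exhausted
        allow_redrive = False
    if confidence < 0.8:         # model is not confident enough
        allow_redrive = False

Only when the model recommends REDRIVE and the guardrails approve it does the system proceed. Otherwise, the message is escalated. The model suggests, and the guardrails authorize.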
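For a slightly fuller picture, the sketch below wraps the same three thresholds in a function and adds the category allowlist check that the guardrails are described as performing. The function name `evaluate_guardrails`, the `reasons` list, and the allowlist contents are assumptions for illustration; only `DOWNSTREAM_TIMEOUT` appears in the schema example.

```python
# Hypothetical allowlist: only failure categories considered safe to replay.
REDRIVABLE_CATEGORIES = frozenset({"DOWNSTREAM_TIMEOUT"})


def evaluate_guardrails(message_age_days, redrive_attempts, confidence,
                        category, allowlist=REDRIVABLE_CATEGORIES):
    """Return allow_redrive plus the reason for every failed check."""
    reasons = []
    if message_age_days > 2:
        reasons.append("message too old")
    if redrive_attempts >= 2:
        reasons.append("retry limit reached")
    if confidence < 0.8:
        reasons.append("confidence below threshold")
    if category not in allowlist:
        reasons.append("category not allowlisted")

    # Redrive is allowed only when no check has failed.
    return {"allow_redrive": not reasons, "reasons": reasons}
```

Recording the reasons alongside the boolean costs almost nothing and makes every denial auditable in the workflow's execution history.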

Deterministic Orchestration with Step Functions

All routing happens inside AWS Step Functions so that the workflow remains explicit and observable.

A simplified decision block looks like this:

    # Assumes: from aws_cdk import aws_stepfunctions as sfn
    # redrive_task and ticket_task are states defined elsewhere in the stack.
    decision = sfn.Choice(self, "Decision")
    decision.when(
        sfn.Condition.and_(
            sfn.Condition.string_equals("$.llm.recommended_action", "REDRIVE"),
            sfn.Condition.boolean_equals("$.guardrails.allow_redrive", True),
        ),
        redrive_task,
    ).otherwise(ticket_task)

This is where probabilistic output meets deterministic execution.
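The routing rule is small enough to mirror in plain Python, which can be handy for unit-testing the contract between the classification and guardrail steps without deploying a state machine. This is only a sketch: the function name `route` is hypothetical, and the state shape is assumed from the JSON paths used in the Choice conditions.

```python
def route(state: dict) -> str:
    """Mirror of the Choice state: REDRIVE only when both conditions hold."""
    recommended = state.get("llm", {}).get("recommended_action")
    allowed = state.get("guardrails", {}).get("allow_redrive")

    if recommended == "REDRIVE" and allowed is True:
        return "REDRIVE"
    # Anything else, including missing fields, falls through to a ticket.
    return "TICKET"
```

Note that the default branch is the safe one: a missing or unexpected field can only ever result in a ticket.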

When This Pattern Makes Sense

This approach works best when:

- DLQ traffic is significant

- Transient failures are common

- Engineers keep repeating the same triage decisions

- MTTR is higher than it should be

It is less useful when:

- DLQ volume is low

- Regulations require human approval

- Failure logic is deeply domain-specific and hard to generalize

Operational Impact

In practice, this pattern:

- Reduces manual triage by 60–80%

- Improves MTTR for transient failures

- Maintains full auditability

- Prevents unsafe retries

And because it’s serverless, cost stays low compared to the manual effort it replaces.

The Bigger Idea

This is not about replacing engineers. It is about removing repetitive reasoning, preserving deterministic safety, making automation observable, and encapsulating decisions in explicit workflows.

The most important component is not the LLM. It is the boundary around the LLM. That boundary is what turns experimentation into production architecture.

Talk to Us

If you want a production-grade implementation for your environment, we can design the guardrails, integrate with your ticketing system, and operate the workflow end to end.
