Have you ever wondered why your RAG responses sound weak, why the citations feel thin, or why the answer misses the point even when the model stays grounded?

Before you blame the model or rebuild the stack, consider a simpler explanation. RAG responses are often evidence failures, rather than generation failures. These issues showed up while building a transcript-grounded RAG system for real user questions, where accuracy, coverage, and trust mattered.

The model can stay well grounded and still disappoint because:

- The right chunk never arrived

- The retrieved chunks were too narrow

- The question was phrased differently from the source material

- The final context was too noisy to support a complete answer

That became obvious while building a transcript-grounded assistant for a client's IMG residency-prep course, designed to help international medical graduates navigate the US residency application process.

The narrow questions worked early, but one broad question exposed the real weakness:

What does this course promise to teach besides mindset?The answer came back grounded, but thin. The content existed in the transcripts, but it never made it into the prompt.

That question led to a much more useful mental model:

RAG qualiIy is the result of several smaller decisions working together, and many of them happen before the model ever sees a word.

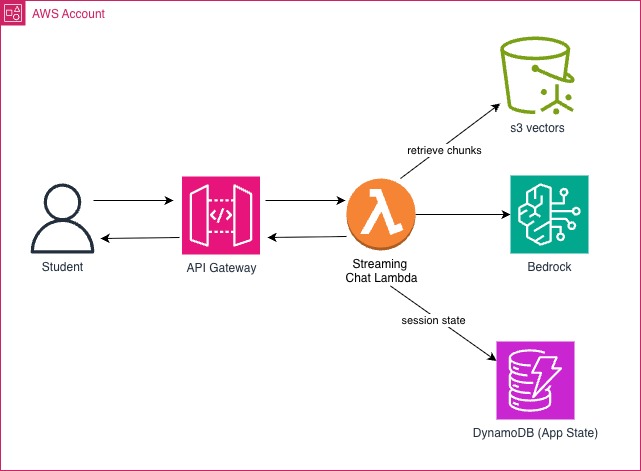

How RAG Works at a High Level

Retrieval-augmented generation has a simple goal: answer questions using external source material instead of relying only on the model's internal knowledge.

In practice, that means turning documents into retrievable chunks, finding the most relevant evidence for a question, and then passing that evidence into the model so the answer stays grounded.

A typical RAG pipeline has three broad phases:

- Content preparation: clean, chunk, and embed the source material

- Retrieval: search for relevant chunks, optionally rewrite the query, and rerank results

- Generation: assemble the final context, generate the answer, and return citations

That flow sounds clean. However, in practice, each stage is a place where quality can quietly degrade, and most teams only notice when the answers start disappointing users.

Here are the seven places the pipeline above most commonly breaks down, and what to do about each one.

1. You Are Testing the Easy Questions

Narrow, direct questions often work early because the source material contains exact semantic matches.

Questions like:

What is an IMG?Who is this course for?

These were easy to answer because the lesson structure already supported them.

The real gaps showed up when users asked broad, indirect, or section-spanning questions.

This question below did not map neatly to one paragraph in the transcript:

What does this course promise to teach besides mindset?The true answer was distributed across personal statements, letters of recommendation, IMG-friendly programs, US clinical experience, ERAS, and USMLE preparation, without ever repeating the phrase "besides mindset."

Without an eval set that included questions like that, the system would have appeared to perform much better than it actually was.

Fix: Build an eval set of 10 to 20 questions that represent your real failure modes, not just the ones that already work. Otherwise, you end up optimizing for the path that already works. A targeted eval set gives you a stable baseline for measuring whether changes to chunking, query rewrite, retrieval, or reranking are actually improving the failure mode you care about.

2. Your Chunks Are Split at the Wrong Boundaries

Bad chunking makes retrieval unpredictable regardless of what you do downstream.

The most common failure modes are familiar:

- Chunks are too large

- Chunks are too small

- Chunks are split at arbitrary token boundaries

- Chunks lose the document structure that gives them meaning

For transcript-heavy content, topic and section boundaries mattered more than neat token budgets alone.

In this project, FAQ-style lessons chunked naturally by question. Broader lesson content needed section-aware chunking based on topic transitions in the transcript itself:

SECTION_BREAK_RULES = (

("what you are going to get from this course", "Course Promise and Outcomes"),

("i am going to show you a 7-step pathway", "Seven-Step Pathway and Mindset"),

("then i will show you what makes a good personal statement", "Application Materials"),

("i will also walk you through how to find img-friendly programs", "Program Search and Clinical Experience"),

("then i will show you how to fill out your eras application", "ERAS and USMLE Preparation"),

)That one decision made retrieval much easier to reason about and debug.

Fix: Chunk around meaning first and token budgets second. Interpretable chunks are debuggable chunks. When chunk boundaries reflect the document's structure, retrieval failures become easier to diagnose and reranking has better evidence to work with.

3. You Are Not Using Metadata

Metadata is not decoration.

It is how retrieval stays aligned to the correct scope.

Every chunk in this system carries metadata that suppors both authorization and citation:

def build_vector_metadata(chunk: dict[str, Any]) -> dict[str, Any]:

return {

"courseId": chunk["courseId"],

"lessonId": chunk["lessonId"],

"lessonTitle": chunk["lessonTitle"],

"sectionTitle": chunk["sectionTitle"],

"chunkIndex": chunk["chunkIndex"],

"sourceType": chunk["sourceType"],

"text": chunk["text"],

}Every retrieval query is then filtered by courseId before anything else runs:

def build_course_filter(course_id: str) -> dict[str, Any]:

return {"courseId": {"$eq": course_id}}That may sound basic, but it is exactly the kind of control that separates a safe system from a leaky one.

Fix: Attach courseId, lessonId, lessonTitle, sectionTitle, and sourceType to every chunk, and filter by scope on every query.

That keeps results aligned to the right course and makes downstream authorization and citation behavior much easier to trust.

4. Vague Questions Go Into Retrieval Unchanged

Users rarely phrase questions the way documents are written. hat mismatch is one of the most common causes of weak retrieval.

To reduce that gap, this system rewrites vague or indirect questions before embedding:

def build_query_rewrite_system_prompt() -> str:

return "\\n".join([

"You rewrite student questions for transcript retrieval.",

"Your goal is to improve recall without changing the user's intent.",

"Expand vague or contrastive wording into retrieval-friendly concepts.",

"Do not answer the question.",

'Return JSON only: {"rewrittenQuery":"string","reason":"string"}',

])What is an IMG? stayed essentially the same.

What does this course promise to teach besides mindset? became a more retrieval-friendly question that explicitly named the course topics the user was actually asking about.

Fix: Rewrite vague or indirect questions before the embedding step. It preserves grounding while reducing the distance between user phrasing and source phrasing.

It also increases the chance that the right sections enter the candidate set before reranking narrows the field.

5. Broad Questions Get One Retrieval Pass

Some questions are too broad to trust to one retrieval query. One embedding cannot reliably cover a question that spans multiple sections of a lesson.

For broad curriculum-style questions, this system fans out into multiple subqueries and merges the result sets before reranking:

def build_retrieval_queries(*, original_query, rewritten_query, course_id) -> list[str]:

queries = [rewritten_query]

if not should_expand_known_topics(question_text=original_query):

return queries

for topic_group in build_topic_query_groups(topics=expanded_topics):

queries.append(f"{course_name} course topics: {', '.join(topic_group)}")

return deduped_queriesThe merge layer uses round-robin interleaving so one subquery does not dominate the candidate set.

This improved recall for section-spanning questions by bringing the missing detailed chunks into the retrieval set, even when one broad query would not.

Fix: For broad questions, fan out into multiple subqueries, merge the result sets, then rerank. That gives broad questions a wider evidence pool without forcing one vague embedding to carry the whole request.

6. You Are Skipping Reranking

Vector retrieval is a broad filter because it preserves recall, but it is usually not precise enough to define final prompt context by itself.

The pattern that worked here was simple:

- Retrieve broadly

- Rerank narrowly

response = client.rerank(

queries=[{"type": "TEXT", "textQuery": {"text": rerank_query_text}}],

sources=build_rerank_sources(retrieval_results),

rerankingConfiguration={

"type": "BEDROCK_RERANKING_MODEL",

"bedrockRerankingConfiguration": {

"numberOfResults": min(rerank_top_n, len(retrieval_results)),

"modelConfiguration": {"modelArn": resolved_rerank_model_arn},

},

},

)This reduces prompt noise and makes it much easier to debug where quality changes:

- Raw retrieval results

- Reranked results

- Final cited results

If the right chunk appears in retrieval but disappears before generation, that is a different problem than if it never appeared at all. In practice, the most useful debugging view is not a polished image but a direct comparison of the raw top-K retrieval list and the reranked top-N list for the same query. If the useful chunk is already present before reranking but disappears afterward, the problem is not retrieval recall. It is the narrowing step between candidate retrieval and final prompt assembly.

Fix: Retrieve a wider top-K, rerank to a narrower final set, and compare the two lists directly. This is the step that turns a plausible candidate set into a tighter, more answer-ready context window.

One more detail mattered after reranking: prompt assembly order. Once the right chunks existed, dumping them into the prompt in arbitrary score order still produced uneven answers. Putting the lesson-level overview first and the supporting sections after it in curriculum order made the final context easier for the model to follow and easier to debug.

7. Broad Questions Need a Summary Layer

One of the most effective changes in this project was adding a synthetic lesson-level summary chunk for broad overview questions.

That chunk did not invent new content. It summarized real lesson sections in a retrieval-friendly format:

def build_curriculum_overview_text(*, lesson_title, sections) -> str | None:

body_lines = [

f"This lesson explains what {lesson_title} teaches besides mindset for IMGs.",

"Beyond mindset, it covers " + join_series(overview_topics) + ".",

"Curriculum topics:",

*[f"- {topic}" for topic in overview_topics],

]

return assemble_text(CURRICULUM_OVERVIEW_SECTION_TITLE, body_lines)That fixed the broad curriculum question, but it created a new problem.

The best citation became:

Lesson Curriculum OverviewCitation Mapping Still Matters

That was acceptable inside the retrieval layer, but it was not acceptable for the user experience.

The final refinement was to store which real transcript sections the summary represented and surface those in the citation instead:

"summaryOfSections": [

{"sectionTitle": "Seven-Step Pathway and Mindset"},

{"sectionTitle": "Application Materials"},

{"sectionTitle": "Program Search and Clinical Experience"},

{"sectionTitle": "ERAS and USMLE Preparation"}

]This is where many RAG systems still feel untrustworthy. Good RAG is about whether the user can follow the path from answer back to source.

Fix: If you use synthetic summary chunks, map the citation display back to the real source sections they represent. That lets you optimize retrieval for broad questions without asking users to trust internal summary labels they never saw in the source.

What Changed After These Fixes

After applying these changes:

- Broad questions that previously returned partial answers began retrieving complete, multi-section context

- Citation quality improved from generic summary labels to traceable transcript sections

- Retrieval debugging became much more deterministic instead of trial-and-error

- Overall answer quality improved without changing the model or fine-tuning

These changes improved the flow of evidence through the system, it wasn't just adding complexity for the sake of it.

Where This Pattern Pays Off

This pattern earns its keep when:

- Users ask overview questions such as "what does this course cover?" instead of single-fact lookups

- The answer is distributed across multiple sections that do not mirror the user's phrasing

- Citation labels need to map back to headings the user actually recognizes

- You want to debug retrieval separately from generation

It is usually not worth the extra summary layer when the source already contains good editorial overviews or when most questions map cleanly to one source span.

The Real Problem Was Never the Model

Most weak RAG systems are not weak because the model is bad, rather they are weak because retrieval, context assembly, and citation design are not doing enough work.

That is the architect's version of the problem.

When to Rebuild

If you work through these seven areas and the system still cannot meet your quality bar, then a more custom architecture becomes a legitimate conversation. By that point, you have identified real limits in control, observability, or retrieval behavior rather than just reacting to weak answers. These seven changes will usually improve answer quality faster than a rebuild.

The model can only be as good as the evidence you give it. Fix the evidence first.

%20(1).svg)

.svg)